Abstract

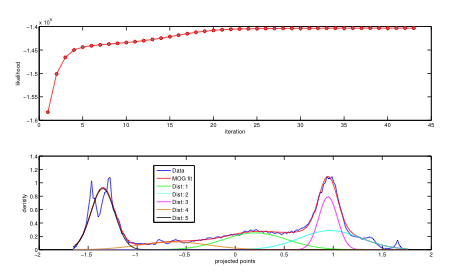

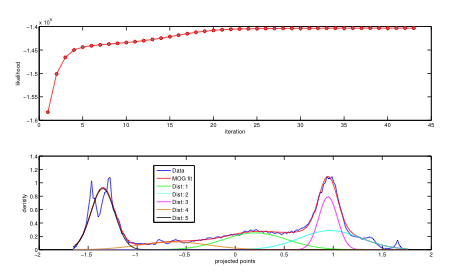

We extend the mixture of Gaussians (MOG) model to the projected mixture of Gaussians (PMOG) model. In the PMOG model, we assume that q-dimensional input data points z_i are projected by a q-dimensional vector w into 1-D variables u_i, and that the projected variables u_i follow a 1-D MOG model. We maximize the likelihood of observing the u_i to estimate both the parameters of the 1-D MOG and the projection vector w. First, we derive an EM algorithm for estimating the PMOG model. Next, we show how the PMOG model can be applied to the problem of blind source separation (BSS). Conventional BSS minimizes an objective function based on an approximation to differential entropy. In contrast, PMOG based BSS simply minimizes the differential entropy of the projected sources by fitting a flexible MOG model in the projected 1-D space while simultaneously optimizing the projection vector w. The advantage of PMOG over conventional BSS algorithms is that it fits non-Gaussian source densities more flexibly, without assuming near-Gaussianity (as conventional BSS does), while remaining computationally feasible.
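To make the model concrete: with u_i = w^T z_i, the 1-D MOG density is p(u) = sum_k pi_k N(u; mu_k, sigma_k^2), and a plug-in estimate of the differential entropy of the projection is -(1/n) sum_i log p(u_i), i.e. the negative average log-likelihood. The Python sketch below is an illustration only, not the distributed Matlab code: it fits the 1-D MOG by standard EM for a *fixed* w and reports that entropy estimate. The function names (project, fit_mog_1d, entropy_estimate) are hypothetical, and the paper's EM update for w and the joint optimization over w are not reproduced here.

```python
import math
import random


def project(z, w):
    """Project q-dimensional points z_i onto the vector w: u_i = w . z_i."""
    return [sum(wj * zj for wj, zj in zip(w, zi)) for zi in z]


def log_normal(u, mu, var):
    """Log density of N(mu, var) evaluated at u."""
    return -0.5 * (math.log(2.0 * math.pi * var) + (u - mu) ** 2 / var)


def fit_mog_1d(u, k=2, iters=100, var_floor=1e-6):
    """Fit a 1-D mixture of k Gaussians to u with standard EM (w held fixed).

    Returns (weights, means, variances, history); history[t] is the average
    log-likelihood under the parameters at the start of iteration t.
    """
    n = len(u)
    s = sorted(u)
    # Deterministic initialisation: means at evenly spaced sample quantiles.
    means = [s[int((j + 0.5) * n / k)] for j in range(k)]
    mean_all = sum(u) / n
    variances = [sum((x - mean_all) ** 2 for x in u) / n] * k
    weights = [1.0 / k] * k
    history = []
    for _ in range(iters):
        # E-step: responsibilities via log-sum-exp for numerical stability.
        resp, total = [], 0.0
        for x in u:
            logs = [math.log(weights[j]) + log_normal(x, means[j], variances[j])
                    for j in range(k)]
            m = max(logs)
            lse = m + math.log(sum(math.exp(l - m) for l in logs))
            total += lse
            resp.append([math.exp(l - lse) for l in logs])
        history.append(total / n)
        # M-step: closed-form updates of weights, means and variances.
        for j in range(k):
            nj = max(sum(r[j] for r in resp), 1e-12)  # guard against empty components
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, u)) / nj
            var_j = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, u)) / nj
            variances[j] = max(var_floor, var_j)
    return weights, means, variances, history


def entropy_estimate(u, weights, means, variances):
    """Plug-in differential entropy estimate: -(1/n) * sum_i log p(u_i)."""
    total = 0.0
    for x in u:
        logs = [math.log(wj) + log_normal(x, mj, vj)
                for wj, mj, vj in zip(weights, means, variances)]
        m = max(logs)
        total += m + math.log(sum(math.exp(l - m) for l in logs))
    return -total / len(u)


# Hypothetical example: 2-D points whose first coordinate is bimodal.
rng = random.Random(1)
z = [[rng.choice([-3.0, 3.0]) + rng.gauss(0.0, 0.5), rng.gauss(0.0, 1.0)]
     for _ in range(1000)]
u = project(z, [1.0, 0.0])
weights, means, variances, history = fit_mog_1d(u, k=2, iters=50)
h_hat = entropy_estimate(u, weights, means, variances)
```

Because an EM iteration cannot decrease the likelihood, history is non-decreasing. Evaluating entropy_estimate for several candidate vectors w is one brute-force way to see the quantity that PMOG based BSS minimizes; the actual method optimizes w jointly with the MOG parameters.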

Download Code

Matlab code for estimating the PMOG model and performing PMOG based BSS can be downloaded here: pmog_webpage_code_data.zip. This software is freely available under the terms of the license described below. The code distribution includes the following directories:

webpage

This directory contains a standalone version of this webpage, pmog.html, for offline browsing.

code

This directory contains code for:

- estimating a mixture of probabilistic PCA (PPCA) models: ppca_mm.m

- estimating a PMOG model: projected_mog_ica.m

- orthogonal and non-orthogonal PMOG based BSS: ppca_mm_ica.m and ppca_mm_ica_no_orth.m, respectively

- running simulations on synthetic data similar to that in the paper: create_mog_source_mixture.m, projected_mog_ica_test.m

- matching components across multiple runs of BSS: raicar_type_sorting_mat.m

- running the 3 demos (see below): pmog_demo1.m, pmog_demo2.m and pmog_demo3.m
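The page does not describe how raicar_type_sorting_mat.m matches components. As a simplified illustration only, the Python sketch below pairs the components of two BSS runs greedily by largest absolute correlation; absolute values are used because BSS recovers sources only up to permutation, sign and scale. The names corr and match_components are hypothetical, and the distributed Matlab routine may work differently.

```python
import math


def corr(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        raise ValueError("correlation undefined for a constant component")
    return cov / math.sqrt(va * vb)


def match_components(run_a, run_b):
    """Greedily pair components of two BSS runs by largest |correlation|.

    run_a, run_b: lists of recovered components (each a list of samples).
    Returns a list of (i, j, signed_corr) with i indexing run_a, j run_b.
    """
    c = [[corr(a, b) for b in run_b] for a in run_a]
    free_a, free_b = set(range(len(run_a))), set(range(len(run_b)))
    pairs = []
    while free_a and free_b:
        # Pick the still-unmatched pair with the largest absolute correlation.
        i, j = max(((i, j) for i in free_a for j in free_b),
                   key=lambda ij: abs(c[ij[0]][ij[1]]))
        pairs.append((i, j, c[i][j]))
        free_a.remove(i)
        free_b.remove(j)
    return pairs


# Hypothetical example: run_b holds the same components reordered, flipped and rescaled.
s1 = [1.0, 2.0, 3.0, 4.0, 5.0]
s2 = [2.0, 1.0, 4.0, 3.0, 5.0]
s3 = [5.0, 1.0, 4.0, 2.0, 3.0]
pairs = match_components([s1, s2, s3], [[-v for v in s3], [2 * v for v in s2], s1])
```

A negative signed correlation in a pair signals a sign flip between runs, which can be undone before averaging or comparing matched components.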

data

This directory contains the following data:

- Natural image data used in the paper can be found in image_pmog_data1.mat and image_pmog_data2.mat. Each image was individually pre-processed to have zero mean and unit standard deviation before further processing. Here's a step-by-step example of pre-processing. Code for pre-processing natural images from the BSD, preprocess_image.m, is included. Here's a readme file for the image data.

- Synthetic data can be found in synthetic_pmog_data.mat. Here's a readme file for the synthetic data.
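The per-image pre-processing described above (zero mean, unit standard deviation) can be sketched in Python as follows. This is an illustration, not preprocess_image.m; the function name standardize_image is hypothetical, and the sketch assumes an image stored as a list of rows of pixel values. It divides by n when computing the standard deviation, whereas MATLAB's std divides by n-1 by default, so results can differ by a factor of sqrt(n/(n-1)).

```python
import math


def standardize_image(img):
    """Return a copy of a 2-D image (list of rows) with zero mean and unit std.

    Uses the population standard deviation (divide by n); MATLAB's std()
    divides by n-1 by default.
    """
    pixels = [p for row in img for p in row]
    n = len(pixels)
    mean = sum(pixels) / n
    std = math.sqrt(sum((p - mean) ** 2 for p in pixels) / n)
    if std == 0:
        raise ValueError("a constant image cannot be scaled to unit standard deviation")
    return [[(p - mean) / std for p in row] for row in img]


# Hypothetical 2 x 2 example image.
img = [[0.0, 2.0], [4.0, 6.0]]
out = standardize_image(img)
```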

demos

This directory contains the results of running pmog_demo1.m, pmog_demo2.m and pmog_demo3.m, saved as HTML files:

- pmog_demo1.m illustrates the application of PMOG based BSS to a synthetic mixture generated with the FastICA package, which is based on the work of Hyvarinen et al. (1998). Here's a webpage containing the step-by-step procedure and results of running pmog_demo1.m.

- pmog_demo2.m illustrates the application of PMOG based BSS to a synthetic mixture of sources with MOG densities, generated by the included code create_mog_source_mixture.m. Here's a webpage containing the step-by-step procedure and results of running pmog_demo2.m.

- pmog_demo3.m illustrates the application of PMOG based BSS to natural image data from the Berkeley Segmentation Dataset and Benchmark (BSD): http://www.eecs.berkeley.edu/Research/Projects/CS/vision/grouping/segbench/. The data is also included in the zip file above (in the Matlab .mat file image_pmog_data2.mat).

In pmog_demo3.m we use non-orthogonal PMOG to account for the fact that the sources are not exactly independent. Here's a webpage containing the step-by-step procedure and results of running pmog_demo3.m. This demo essentially regenerates the results shown in Figure 7 [BSS performance for natural image dataset 2] of the paper.
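Synthetic mixtures along the lines of pmog_demo2.m can be sketched outside Matlab as follows, assuming the standard linear instantaneous BSS model: each source is drawn from its own 1-D MOG and the sources are combined by a mixing matrix, x = A s. This Python sketch is an assumption about the general recipe, not a port of create_mog_source_mixture.m; the function names, source parameters and the matrix A are hypothetical.

```python
import random


def sample_mog(n, weights, means, sds, rng):
    """Draw n samples from a 1-D mixture of Gaussians (weights are relative)."""
    comps = range(len(weights))
    out = []
    for _ in range(n):
        k = rng.choices(comps, weights=weights)[0]  # pick a mixture component
        out.append(rng.gauss(means[k], sds[k]))     # sample from that component
    return out


def mix_sources(sources, A):
    """Linear instantaneous mixture x = A s (sources: q lists of length n; A: p x q)."""
    n = len(sources[0])
    return [[sum(a_rc * s[t] for a_rc, s in zip(row, sources)) for t in range(n)]
            for row in A]


# Hypothetical example: a bimodal and a skewed source, mixed by a fixed 2 x 2 matrix.
rng = random.Random(0)
s1 = sample_mog(500, [0.5, 0.5], [-2.0, 2.0], [0.5, 0.5], rng)
s2 = sample_mog(500, [0.8, 0.2], [-0.5, 2.0], [0.5, 1.0], rng)
A = [[1.0, 0.5], [0.3, 1.0]]
x = mix_sources([s1, s2], A)
```

Each row of x is one observed mixture; a BSS method such as PMOG then tries to recover s1 and s2 (up to order, sign and scale) from x alone.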

Software for PMOG based BSS by Gautam V. Pendse is licensed under the terms described in the file license.html. Permissions beyond the scope of this license may be available at gautam dot pendse at gmail dot com.